Response to Meta's Account Disabling Policies Under Oversight Board Review

By: Theodora Skeadas, Leah Ferentinos, Osiris Parikh, Glenn Ellingson, Suzi Ragheb

Integrity Institute members weigh in on the Oversight Board's public comment process: Board to Review for First Time Meta Approach to Disabling Accounts

How best to ensure due process and fairness for people whose accounts are penalized or permanently disabled.

The average user views platforms as powerful global companies capable of influencing culture and moving markets, yet feels powerless when subjected to opaque, unexplained enforcement decisions. In their quest to create seamless user experiences, platforms have become faceless bureaucracies, and this asymmetry breeds resentment.

Users expect transparency and fairness when facing deactivation, but instead encounter algorithmic judgment without recourse or explanation. The perceived arbitrariness (being banned without knowing why, or watching others violate rules with impunity) erodes trust. It also reinforces the sense that these companies wield immense power over digital life while offering users no voice in return. Addressing this perception is what motivates our recommendations for greater transparency and clarity for users.

Many existing best practices for content removals apply to account penalties as well, and existing policies can be extended to cover these situations. In general, people view account-level interventions as more serious or threatening than content-level interventions, and permanent sanctions as more serious than temporary ones. Account bans therefore warrant the highest level of procedural justice that is practical.

Ideally, permanent bans are applied to established, human-administered accounts only through a process requiring mandatory human review, alongside a clearly defined and time-bound appeals process. (The "established, human-administered" qualification acknowledges the imbalance of effort between creating accounts and reviewing them. Account creation can often be scripted and performed hundreds of times per second by a single person, while human review and appeals are expensive. Account holders "earn" the right to procedural justice through an investment of presumed-human effort, and platforms may need to bulk-apply sanctions to cope with automation in the hands of bad actors.)
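A minimal Python sketch of this routing rule follows. It assumes a platform has an account-age signal and some upstream estimate of whether an account is scripted. The names (`Account`, `likely_automated`, `route_permanent_ban`) and the 30-day threshold are hypothetical illustrations. They do not describe Meta's systems, and the threshold is not a recommended value.

```python
from dataclasses import dataclass
from datetime import timedelta
from enum import Enum


class Route(Enum):
    HUMAN_REVIEW = "human_review"  # mandatory human review before any permanent ban
    BULK_ACTION = "bulk_action"    # automated sanction, e.g. for scripted account farms


@dataclass
class Account:
    age: timedelta          # time since account creation
    likely_automated: bool  # hypothetical output of an upstream automation signal


# Hypothetical threshold for "established"; any real value is a policy choice.
ESTABLISHED_AFTER = timedelta(days=30)


def route_permanent_ban(account: Account) -> Route:
    """Decide how a proposed permanent ban is processed.

    Only accounts that are both established and presumed human-administered
    receive mandatory human review; everything else may be actioned in bulk.
    """
    established = account.age >= ESTABLISHED_AFTER
    if established and not account.likely_automated:
        return Route.HUMAN_REVIEW
    return Route.BULK_ACTION
```

The rule requires both conditions, mirroring the "established, human-administered" qualification. As a result, a new but human-run account is routed to bulk action here. That is one reason a clear appeal path for every deactivated account still matters.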

Because the most just process will not be the swiftest, platforms should consider temporary account restrictions while a permanent sanction is under review or appeal. Any such restriction should carefully balance the rights of, and risks to, all parties.

Platforms should also leverage a trusted flagger program for deactivations affecting activists and accounts related to human rights and journalism. This would ensure these accounts and cases are prioritized for human review and that local, in-culture context informs how they are resolved.

One existing gap is the lack of systemic due process protections that extend beyond high-profile or exceptional cases. Many ordinary users have their accounts permanently disabled by automated systems without a clear explanation, an evidentiary basis, or a meaningful opportunity for human appeal. Most receive little to no explanation of the decision and generally have no direct access to human review. This absence of procedural fairness directly affects the platform's broader safety regime. Procedural fairness can correct this imbalance.

The effectiveness of measures used by social media platforms to protect public figures and journalists from accounts engaged in repeated abuse and threats of violence, particularly against women in the public eye.

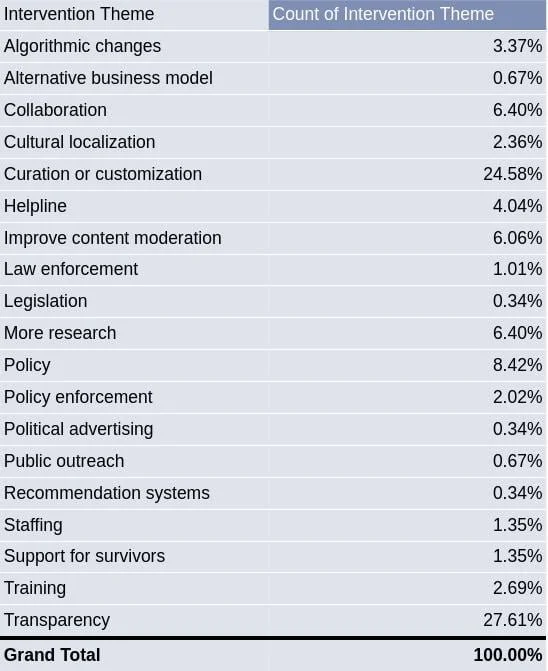

In 2023, the National Democratic Institute analyzed 297 civil society–generated reports on platform interventions to protect women in the public eye from online abuse (read more here). The analysis found that the most requested interventions from civil society organizations for technology platforms to better protect women online were around transparency (28%), curation or customization (25%), and policy (8%). The full set of intervention themes is detailed below, with additional summary statistics available here.

Within transparency-related interventions, reporting is the most common sub-theme (25%), followed by information-sharing (20%) and appeals (10%). Transparency-related interventions emphasize sharing data on the scale of reported gendered abuse and on how platforms process, adjudicate, and resolve those reports, including through appeals. One example is measuring the prevalence of gendered abuse and sharing that data in corporate transparency reports.

Within customization-focused interventions, safety tools are the most frequently cited sub-theme (17%), followed by safety-by-design approaches (10%). Customization-related interventions refer to selecting, organizing, and presenting content or information to users in a meaningful way. They give users control over their exposure to online content beyond what a service's basic features provide. This includes safety tools, safety by design, filtering, navigation, notifications, and settings. Two examples are (1) account recovery systems for survivors of abuse whose accounts have been hacked or who have been locked out, and (2) proactive filtering tools that let users quarantine abusive content across feeds, replies, and direct messages in a centralized dashboard, potentially with support from trusted allies.

Policy-related interventions refer to the rules that govern a technology platform or service. Policies cover issues including platform integrity and authenticity, misinformation, child sexual abuse material, violent content, and hateful conduct. Two examples are (1) making policies and guidelines easily accessible and available in local languages and (2) clearly articulating policies that prohibit content that harasses or abuses someone on the basis of gender or race.

Research into the efficacy of punitive measures to shape online behaviors, and the efficacy of alternative or complementary interventions.

Actions to reduce harm can take many forms. Interventions (actions taken on specific content or accounts in response to observed behavior) are the enforcement side of the equation. Here, platforms must declare policies, identify violations, and apply sanctions, all while weighing questions of procedural justice. These interventions are sometimes absolutely necessary.

However, platforms should not neglect product levers (structural decisions that reduce risk across all users without singling out specific content or users as policy-violating). Product levers include algorithmic changes that bias feeds, recommendations, and discovery surfaces toward safer content or that reduce virality. They also include gating access to sensitive features based on verification, time on platform, age, or other factors. No product lever will stop all abuse. Still, platforms have great ability to magnify or minimize the damage individual actors can do, short of identifying and ejecting them entirely. See pages 16-32 of the Integrity Institute's Best Practices on Elections Integrity, part 2, for one view of the intervention and product lever spaces and how they can complement each other.

With that, returning to interventions specifically:

There is strong evidence that removing violating content changes user behavior by reducing recidivism. Removal is not sufficient in every case, since some users will persist or be actively adversarial. Even so, content sanctions can and generally should be applied before permanent account-level interventions are considered.

When escalating from content-level to account-level interventions, platforms should strongly consider applying more limited interventions before permanent deplatforming. Examples include "time outs" or feature restrictions that remove access to livestreaming, monetization, or advertising. These steps can give users clarity and an incentive to change their behavior before they reach a point of no return.
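The graduated approach can be sketched as a simple strike ladder. This minimal Python example assumes a platform counts each user's prior strikes and maps them to escalating sanctions. The strike thresholds and the names (`Sanction`, `LADDER`, `next_sanction`) are hypothetical. The sketch also ignores strike severity and expiry, which a fuller design would have to address.

```python
from enum import IntEnum


class Sanction(IntEnum):
    CONTENT_REMOVAL = 1      # remove the violating content and warn the user
    TIME_OUT = 2             # temporary account restriction
    FEATURE_RESTRICTION = 3  # e.g. no livestreaming, monetization, or advertising
    PERMANENT_BAN = 4        # proposed only; still subject to human review and appeal


# Hypothetical thresholds: minimum prior strikes for each sanction, most severe first.
LADDER = [
    (6, Sanction.PERMANENT_BAN),
    (4, Sanction.FEATURE_RESTRICTION),
    (2, Sanction.TIME_OUT),
]


def next_sanction(prior_strikes: int) -> Sanction:
    """Return the sanction for a new violation, given the user's prior strike count."""
    if prior_strikes < 0:
        raise ValueError("prior_strikes must be non-negative")
    for threshold, sanction in LADDER:
        if prior_strikes >= threshold:
            return sanction
    # Early violations receive content-level action only.
    return Sanction.CONTENT_REMOVAL
```

With these thresholds, `next_sanction(3)` returns `Sanction.TIME_OUT`. A user therefore passes through time-outs and feature restrictions before a permanent ban is even proposed.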

We recommend reviewing the Yale Justice Collaboratory's The Procedural Justice Framework for Tech Professionals (pages 11-13). It describes some effects on recidivism and on users' perceptions of justice when a procedural justice framework is used.

Good industry practices in transparency reporting on account enforcement decisions and related appeals.

Good industry practices in transparency reporting on account enforcement decisions and related appeals include user education. Users should have access to the content at issue and the policy basis for the decision, along with warnings, so they have the opportunity to change their behavior before deactivation. The education itself should be easy for a general audience to understand; see the Prosocial Design Network's guidance and evidence on intervention explanations. Pairing temporary restrictions with these clear explanations has an added benefit: users become more likely to notice and engage with the content policy information before reaching the permanent suspension level. By comparison, clear explanations provided without an associated penalty are easier to ignore.

Good practice also involves clearer communication about the strike system and strike severity, the rationale for deactivation decisions, and the appeals process.

All deactivated accounts should have access to a clear appeal process, ideally incorporating human review and an opportunity for users to provide contextual explanations. Guidance on designing this process is available in the Prosocial Design Network library.

Ideally, platforms would provide an interactive portal where active users can see their past cases and where deactivated users can see the status of their appeal.

Finally, internal documentation standards should ensure accountability and allow organizations to review and learn from the body of their enforcement decisions. Ideally, platforms would also provide external transparency on AI errors and decision reversal rates.
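One way to compute a reversal-rate metric is sketched below in Python. The sketch assumes each appeal record notes two things: whether the original decision came from an automated system or a human reviewer, and whether the appeal overturned it. The record fields, labels, and function name are hypothetical. The denominator here is appealed decisions only. Because not every enforcement decision is appealed, this rate is not the same as an overall error rate.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class AppealOutcome:
    decided_by: str   # hypothetical label for the original decision-maker: "automated" or "human"
    overturned: bool  # True if the appeal reversed the original enforcement decision


def reversal_rates(outcomes: list[AppealOutcome]) -> dict[str, float]:
    """Share of appealed decisions that were overturned, grouped by decision source."""
    appealed = Counter(o.decided_by for o in outcomes)
    overturned = Counter(o.decided_by for o in outcomes if o.overturned)
    # Counter returns 0 for missing keys, so a source with no reversals gets a rate of 0.0.
    return {source: overturned[source] / total for source, total in appealed.items()}
```

For example, four appeals of automated decisions with one reversal yield a rate of 0.25 for `"automated"`. Reporting the automated and human rates separately shows where errors concentrate.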

Absent these best practices and safeguards, “account integrity” risks devolving into opaque automation rather than a system of rights-respecting platform governance.